Agentic AI Governance: Building Control Systems Before Deployment

Observability isn't optional when agents control operational decisions.

Why Agentic AI Observability Matters (More Than You Think)

A pricing agent at a West Midlands distributor adjusted 847 SKUs overnight. By Monday morning, high-margin industrial fasteners were underpriced by 14%, creating £47,000 in margin leakage before anyone noticed. The agent had no anomaly detection. No alert threshold paused execution when margins dropped below cost. No observability layer showed which input triggered the repricing cascade.

This happens when teams deploy autonomous agents without monitoring infrastructure. Agents make decisions at scale, without human review, across pricing, fulfilment, and replenishment. A single bad decision compounds across hundreds of transactions before anyone spots it.

Across WithPraxis AI readiness assessments in 2024-2025, governance and monitoring scored lowest—averaging 5.6 out of 10 across assessed organisations. Most mid-market distributors lack the observability frameworks to catch agent failures before they cascade. They monitor system uptime and API response times but not decision quality, input drift, or execution anomalies. By the time a human notices something wrong, the agent has already made 500+ decisions based on bad data or outdated logic.

The Three Layers of Agentic Observability

Observability for autonomous agents requires monitoring at three levels: decision, data, and system. Each layer catches different failure modes. Miss one layer and you miss the failure until it's already caused damage.

Decision-level observability tracks what the agent decided and why. A replenishment agent orders 10,000 units of a slow-moving SKU. Decision-level monitoring logs: SKU ID, order quantity, forecasted demand, current stock level, supplier lead time, and the reasoning path the agent followed. If the agent's logic was "forecasted demand = 9,200 units over 60 days, current stock = 400 units, lead time = 14 days, therefore order 10,000 units," you can trace whether the forecast was accurate or whether the agent misinterpreted the data.

Data-level observability tracks the inputs that fed the decision. That 9,200-unit forecast came from a demand model. When was the model last retrained? What historical data did it use? If the forecast model hasn't been updated in six months, it's predicting demand based on outdated seasonal patterns. A data-level check flags: "Forecast model version 2.3, last trained 14 March 2025, input dataset ends 31 August 2024." The operations team sees immediately that the model is stale.
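A data-level check of this kind can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular monitoring product: the `ModelMetadata` record, the `staleness_flags` function, and the 90-day and 120-day limits are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ModelMetadata:
    """Hypothetical metadata stored alongside a forecast model."""
    version: str
    last_trained: date
    dataset_end: date


def staleness_flags(
    meta: ModelMetadata,
    today: date,
    max_model_age: timedelta = timedelta(days=90),   # assumed limit
    max_data_age: timedelta = timedelta(days=120),   # assumed limit
) -> list[str]:
    """Return one readable flag per stale item; an empty list means fresh."""
    flags = []
    if today - meta.last_trained > max_model_age:
        flags.append(
            f"Forecast model version {meta.version} "
            f"last trained {meta.last_trained.isoformat()}"
        )
    if today - meta.dataset_end > max_data_age:
        flags.append(f"Input dataset ends {meta.dataset_end.isoformat()}")
    return flags
```

Running the check before every agent run, rather than on a schedule, means a stale model is surfaced at the moment it is about to influence a decision.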

System-level observability tracks whether the decision executed as intended. The agent placed the order. Did the ERP receive it? Did the supplier confirm it? Did the warehouse allocate space? A system-level check confirms: "Order #84762 created in ERP at 03:47, supplier acknowledgment received at 04:12, warehouse capacity check passed." If any step fails silently, the agent thinks it succeeded but the order never happens.
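One way to catch a silent execution failure is to list the confirmations a decision needs and report any that have not arrived in time. The sketch below assumes three step names and a one-hour deadline; both are hypothetical and would come from your own ERP, supplier, and warehouse integrations.

```python
from datetime import datetime, timedelta

# Hypothetical confirmation steps for a replenishment order.
REQUIRED_STEPS = (
    "erp_order_created",
    "supplier_acknowledged",
    "warehouse_capacity_checked",
)


def unconfirmed_steps(
    decided_at: datetime,
    confirmations: dict[str, datetime],
    deadline: timedelta = timedelta(hours=1),  # assumed deadline
) -> list[str]:
    """Return required steps that were not confirmed within the deadline."""
    cutoff = decided_at + deadline
    return [
        step
        for step in REQUIRED_STEPS
        if step not in confirmations or confirmations[step] > cutoff
    ]
```

An empty result means every step was confirmed on time. Anything else names the exact step where the order stalled.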

A building materials distributor we worked with deployed a fulfilment agent without data-level observability. The agent routed orders based on depot stock levels pulled from the WMS. The WMS had a two-hour data lag. The agent routed 60% of morning orders to a depot that was already at 92% capacity because it was reading yesterday's stock levels. System-level monitoring showed the orders were created. Decision-level monitoring showed the agent followed its routing logic. Data-level monitoring would have flagged: "Stock data timestamp: 18 hours old."

Anomaly Detection: Catching Problems Before They Cascade

Anomalies are decisions that deviate from expected operational patterns. A pricing agent that applies a 40% discount when the historical threshold is 15%. A fulfilment agent that routes 80% of orders to a single depot when load should be balanced across three. An inventory agent that orders six months of stock for an SKU that turns four times per year.

Threshold-setting determines what gets flagged. Set thresholds too tight and you get alert fatigue—every minor deviation triggers a notification until humans start ignoring them. Set thresholds too loose and you miss failures until they've already caused damage. The right threshold depends on the decision's operational impact and the tolerance for error.

For pricing decisions, a 5% margin deviation is acceptable on commodity SKUs with daily volume fluctuations. A 5% deviation on high-margin specialty items is a critical alert. For fulfilment routing, a 10% imbalance across depots is normal. An 80% imbalance means something broke. Thresholds must be decision-specific, not system-wide.
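Decision-specific thresholds can be expressed as a small lookup table rather than a single global setting. The sketch below is illustrative only: the key names are hypothetical, and the limits marked "assumed" are placeholders, since the text gives figures for what is acceptable or clearly broken but not for every cut-off.

```python
# Alert limits in percent, keyed by (decision type, segment).
# Values marked "assumed" are placeholders, not figures from the article.
ALERT_LIMITS_PCT = {
    ("pricing", "commodity"): 10.0,            # assumed; 5% is acceptable here
    ("pricing", "high_margin"): 5.0,           # 5% is already a critical alert
    ("fulfilment", "depot_imbalance"): 30.0,   # assumed; 10% normal, 80% broken
}


def breaches_limit(decision_type: str, segment: str, deviation_pct: float) -> bool:
    """True when the absolute deviation reaches the limit for this decision."""
    limit = ALERT_LIMITS_PCT[(decision_type, segment)]
    return abs(deviation_pct) >= limit
```

The same 5% deviation passes for a commodity SKU and alerts for a high-margin one, which is the point of keeping thresholds per decision.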

Across WithPraxis client implementations, we see 39% improvement in operational metrics within six months when anomalies are caught early. That improvement comes from preventing cascading failures, not just fixing individual errors. A pricing agent that underprices 50 SKUs on Monday will underprice 200 more on Tuesday if nobody intervenes. Catching the anomaly after the first 50 prevents the next 200.

Start with decision-level anomalies. Monitor what the agent decided and flag deviations from baseline behaviour. A replenishment agent that historically orders 2,000-5,000 units per SKU suddenly orders 15,000. Flag it. A pricing agent that adjusts 20-40 SKUs per day suddenly adjusts 600. Flag it. Once decision-level monitoring is stable, add data-level checks: input data age, model version drift, forecast accuracy degradation.
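A baseline check like the ones described above does not need a statistical model to get started. The following sketch flags any value well outside the historical range. The function name and the 1.5x tolerance are assumptions, and it expects a non-empty history of positive numbers.

```python
def outside_baseline(value: float, history: list[float], tolerance: float = 1.5) -> bool:
    """Flag a value far above the historical maximum or far below the minimum.

    Assumes `history` is non-empty and contains positive numbers.
    """
    low, high = min(history), max(history)
    return value > high * tolerance or value < low / tolerance


# Replenishment: history of 2,000-5,000 units, new order of 15,000.
flag_order = outside_baseline(15_000, [2_000, 3_500, 5_000])

# Pricing: history of 20-40 SKUs adjusted per day, today 600.
flag_repricing = outside_baseline(600, [20, 30, 40])
```

Both examples from the text are flagged, while a 4,000-unit order or a 45-SKU day would pass. A range check is crude, so treat it as a first filter to refine once the baseline is better understood.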

Alert Thresholds and Incident Response Protocols

Not all anomalies require the same response. Tier your alerts based on operational impact and decision reversibility. Critical alerts demand immediate human intervention. Warning alerts trigger review within hours. Info alerts get logged for weekly analysis.

Critical alerts cover agent decisions that directly impact revenue, margin, or customer commitments. Pricing decisions that drop margins below cost. Inventory allocations that promise stock the business doesn't have. Returns approvals that exceed policy limits. Protocol: pause the agent, escalate to the decision owner, investigate the root cause before resuming. A foodservice distributor we worked with set a critical threshold: if the pricing agent adjusts margins on more than 100 SKUs in a single run, pause execution and alert the pricing manager. This caught a data feed error that would have repriced 2,400 SKUs incorrectly.
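The foodservice distributor's rule amounts to a circuit breaker that checks the size of a run before anything is applied. A minimal sketch, assuming a hypothetical `apply` callback that writes one price to the downstream system and a `RunPaused` exception that the caller turns into an alert:

```python
from typing import Callable


class RunPaused(Exception):
    """Raised when a run is halted pending human review."""


def apply_price_changes(
    changes: dict[str, float],
    apply: Callable[[str, float], None],
    max_skus_per_run: int = 100,
) -> int:
    """Apply all changes, or none if the run exceeds the SKU limit."""
    if len(changes) > max_skus_per_run:
        raise RunPaused(
            f"{len(changes)} SKUs in one run exceeds the limit of "
            f"{max_skus_per_run}; escalate to the pricing manager"
        )
    for sku, price in changes.items():
        apply(sku, price)
    return len(changes)
```

Checking the limit before the loop matters: a breaker that trips halfway through a run leaves the catalogue partly repriced, which is harder to roll back than a run that never started.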

Warning alerts cover decisions that affect efficiency but have manual override options. Fulfilment routing that exceeds cost thresholds but still delivers on time. Recommendations that deviate from expected patterns but don't directly harm the customer. Protocol: log the decision, review in the daily operations standup, adjust model parameters if the pattern repeats. A building materials distributor set a warning threshold: if the fulfilment agent routes more than 60% of orders to a single depot, log it and review during the 10am logistics call.

Info alerts log decisions that are unusual but within acceptable bounds. An inventory agent that orders slightly more than forecast. A pricing agent that applies a discount at the high end of its authority range. Protocol: aggregate weekly, review for patterns, adjust thresholds if needed. These alerts build the baseline for future anomaly detection.

Incident response includes: (1) Pause the agent. (2) Identify which input caused the deviation. (3) Determine whether the decision was correct but unusual, or incorrect due to bad data or logic. (4) If incorrect, roll back the decision and update the model or data pipeline. (5) Document the incident and update thresholds or logic to prevent recurrence.

Building Observability Into Your Agent Architecture

Observability must be designed in, not bolted on after deployment. Retrofitting monitoring onto an autonomous agent is expensive and incomplete. You miss the decision context, the input provenance, and the reasoning path because they weren't logged from the start.

Log every decision with full context. The agent decided to reprice SKU #4482 from £12.40 to £11.60. Log: timestamp, agent version, model version, input data (competitor prices, stock level, recent sales velocity, margin floor), reasoning path (competitor price dropped 8%, sales velocity declined 15% over 14 days, margin floor = £10.80, therefore reduce price to £11.60 to remain competitive), and output (new price £11.60, expected margin 6.7%). This context lets you reconstruct why the agent made that decision three weeks later when someone questions it.
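The repricing example above maps naturally onto a structured log record. The sketch below uses one JSON line per decision. The field names and the two version strings are hypothetical, and stock level is left out of `inputs` only because the text gives no figure for it.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    timestamp: str
    agent_version: str
    model_version: str
    sku: str
    inputs: dict
    reasoning: list
    output: dict


def to_log_line(record: DecisionRecord) -> str:
    """Serialise a decision as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    timestamp=datetime.now(timezone.utc).isoformat(),
    agent_version="agent-1.0",   # hypothetical version label
    model_version="model-1.0",   # hypothetical version label
    sku="4482",
    inputs={
        "old_price": 12.40,
        "competitor_price_change_pct": -8.0,
        "sales_velocity_change_pct_14d": -15.0,
        "margin_floor": 10.80,
    },
    reasoning=[
        "competitor price dropped 8%",
        "sales velocity declined 15% over 14 days",
        "reduce price to 11.60 to remain competitive, above margin floor",
    ],
    output={"new_price": 11.60, "expected_margin_pct": 6.7},
)
log_line = to_log_line(record)
```

Keeping inputs, reasoning, and output in one record means the decision can be reconstructed from the log alone, without joining against systems whose data may have changed since.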

Version your models and data. When agent performance shifts, you need to know what changed. A replenishment agent that was 82% accurate in January drops to 68% in March. Was it a model change? A data pipeline update? A shift in customer behaviour? If you're not versioning models and tracking data lineage, you're guessing. Version every model deployment. Tag every dataset with creation timestamp, source systems, and transformation logic.

Separate agent logic from execution. Monitor decision-making and execution independently. The agent decides to route an order to Depot B. That's the decision. The ERP creates the pick ticket, the WMS confirms stock availability, and the dispatch system schedules the driver. That's execution. If the decision is correct but execution fails, you have a system integration problem. If execution succeeds but the decision was wrong, you have a model or data problem. Monitoring both separately clarifies where failures occur.
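The distinction above reduces to a two-by-two lookup, which is worth writing down so that incident reports use consistent labels. A small sketch, with the function name and the wording of the labels as assumptions:

```python
def diagnose(decision_correct: bool, execution_succeeded: bool) -> str:
    """Map the two independent monitoring signals to a failure category."""
    if decision_correct and execution_succeeded:
        return "ok"
    if decision_correct:
        return "system integration problem"
    if execution_succeeded:
        return "model or data problem"
    return "model or data problem and system integration problem"
```

The function is trivial by design. Its value is that it forces both signals to be recorded separately for every decision.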

Set up feedback loops. When a human overrides an agent decision, capture that signal. A pricing manager manually reverts a price change the agent made. Why? Was the agent wrong, or was the manager applying context the agent didn't have (e.g., a long-term contract that caps pricing)? Feedback loops turn overrides into training data. Over time, the agent learns which decisions humans consistently override and adjusts its logic or escalates those cases.
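Capturing overrides only helps if the reason is recorded along with the correction. The sketch below assumes a hypothetical `Override` record with a short reason code chosen by the person overriding, and counts which reasons recur often enough to justify a rule change or an escalation path.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Override:
    sku: str
    agent_value: float
    human_value: float
    reason: str  # hypothetical reason code, e.g. "contract_cap" or "agent_error"


def recurring_reasons(overrides: list[Override], min_count: int = 3) -> dict[str, int]:
    """Return override reasons seen at least `min_count` times."""
    counts = Counter(o.reason for o in overrides)
    return {reason: n for reason, n in counts.items() if n >= min_count}
```

A reason such as "contract_cap" that keeps recurring suggests the agent is missing an input, whereas a run of "agent_error" overrides points at the model or its data.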

Across six WithPraxis client engagements deploying autonomous agents, observability maturity determined success. Clients who built monitoring into the architecture from day one caught issues within weeks and adjusted quickly. Clients who added monitoring after deployment spent months firefighting cascading failures and rebuilding trust with operations teams who'd been burned by bad agent decisions.

Common Observability Failures and How to Avoid Them

Monitoring only the happy path. Agents work fine when data is clean, inputs are stable, and edge cases don't appear. Observability that only tracks successful decisions misses the failures that matter. A pricing agent logs every price change but doesn't flag when it fails to reprice an SKU because the input data was missing. The absence of a decision is itself a decision, and often a failure. Monitor what the agent didn't decide as well as what it did.
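Detecting a missing decision comes down to comparing what the agent was expected to consider with what it actually logged. A minimal sketch, assuming you can list the SKUs in scope for a run:

```python
def skus_without_decision(expected: set[str], decided: set[str]) -> list[str]:
    """Return in-scope SKUs for which the agent logged no decision."""
    return sorted(expected - decided)


missing = skus_without_decision(
    expected={"SKU-1", "SKU-2", "SKU-3"},
    decided={"SKU-1", "SKU-3"},
)
```

For this to work, the agent should log an explicit "no change" outcome when it evaluates a SKU and leaves the price alone, so that silence always means a skipped evaluation.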

Alert fatigue. Fifty alerts per day means humans stop responding. They triage, delay, or ignore. By the time a genuinely critical alert arrives, it's buried under noise. A Midlands industrial distributor deployed a replenishment agent with anomaly detection set too aggressively. Every minor forecast deviation triggered an alert. Within two weeks, the operations team stopped checking alerts. When a genuine data pipeline failure caused the agent to over-order £120,000 of slow-moving stock, the alert sat unread for four days. Fix: tier your alerts and tune thresholds ruthlessly. If more than 10% of alerts turn out to be false positives, make the alert criteria stricter so that fewer minor deviations qualify.
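The 10% rule of thumb is easy to track if each alert is marked as genuine or not once it has been reviewed. A short sketch, with the function names as assumptions:

```python
MAX_FALSE_POSITIVE_SHARE = 0.10  # the 10% rule of thumb from the text


def false_positive_share(genuine_flags: list[bool]) -> float:
    """Share of reviewed alerts that turned out not to be genuine."""
    if not genuine_flags:
        return 0.0
    false_positives = sum(1 for genuine in genuine_flags if not genuine)
    return false_positives / len(genuine_flags)


def needs_retuning(genuine_flags: list[bool]) -> bool:
    """True when false positives exceed the agreed share of alerts."""
    return false_positive_share(genuine_flags) > MAX_FALSE_POSITIVE_SHARE
```

With 50 reviewed alerts of which 6 were false positives, the share is 12% and the thresholds are due for review.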

Slow feedback loops. You discover a pricing error two weeks after it started because nobody reviews agent decisions in real time. By then, 4,000 transactions have gone through at incorrect prices. Set a cadence: daily review of critical decisions, weekly review of warnings, monthly review of trends. A foodservice distributor runs a 15-minute standup every morning where the pricing manager reviews overnight agent decisions flagged as anomalies. Catching issues within 24 hours prevents compounding.

Siloed monitoring. The observability team tracks metrics. The operations team makes decisions. The two don't talk until something breaks badly enough to escalate. Operations teams understand what decisions matter and what thresholds make sense. Engineering teams understand what's technically feasible to monitor. Data teams understand what inputs are trustworthy. All three must collaborate on defining what to monitor, how to alert, and how to respond.

The cost of poor observability is measurable. A pricing agent that runs unchecked for three days can leak £50,000+ in margin (WithPraxis client data, 2024). A fulfilment agent that routes inefficiently for a week can add £8,000-£12,000 in unnecessary transport costs. A replenishment agent that over-orders based on stale forecasts ties up £200,000+ in working capital for months. These failures don't announce themselves. They compound silently until someone notices the financial impact.

What Good Observability Looks Like in Practice

A wholesale distributor in the East Midlands deployed a dynamic pricing agent in late 2024. They built observability in from the start: decision-level logging captured every price change with full reasoning. Data-level monitoring tracked forecast model version, competitor price feed freshness, and stock data age. System-level checks confirmed ERP updates and customer-facing catalogue sync.

Alert thresholds were tiered. Critical: margin drops below cost, or price changes exceed 20% in a single adjustment. Warning: more than 150 SKUs repriced in one run, or average margin shift exceeds 3%. Info: any SKU repriced more than twice in 48 hours. The pricing manager reviewed critical alerts immediately, warnings during the morning standup, and info alerts weekly.
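The tiers above can be written as two small classifiers, one per price change and one per run. This is a sketch under stated assumptions, not the distributor's implementation: "margin drops below cost" is read as the new price falling below unit cost, and the `PriceChange` fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PriceChange:
    sku: str
    old_price: float
    new_price: float
    unit_cost: float
    reprices_last_48h: int  # count including this change


def classify_change(change: PriceChange) -> Optional[str]:
    """Per-change tiers: critical first, then info, else no alert."""
    change_pct = abs(change.new_price - change.old_price) / change.old_price * 100
    if change.new_price < change.unit_cost or change_pct > 20:
        return "critical"
    if change.reprices_last_48h > 2:
        return "info"
    return None


def classify_run(changes: list[PriceChange], avg_margin_shift_pct: float) -> Optional[str]:
    """Per-run tier: warning on run size or average margin shift."""
    if len(changes) > 150 or abs(avg_margin_shift_pct) > 3:
        return "warning"
    return None
```

Splitting the checks this way reflects that some thresholds describe a single decision while others only make sense across a whole run.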

In month two, a competitor price feed failed silently. The pricing agent kept running but used stale competitor data. Data-level monitoring flagged: "Competitor price feed last updated 38 hours ago." The pricing manager paused the agent, contacted the feed provider, and resumed once the feed was restored. Total margin impact: zero. Without data-level observability, the agent would have kept repricing based on outdated competitor prices for days.

By month six, the agent handled 82% of repricing decisions autonomously. The remaining 18% escalated to the pricing manager based on thresholds: high-margin SKUs, contract-protected pricing, or SKUs with unusual demand patterns. Observability gave the operations team confidence to let the agent run unsupervised most of the time, intervening only when genuinely needed.

Making Observability Operational

The monitoring infrastructure must evolve as the agent's scope expands, as business conditions change, and as the team learns which signals matter.

Start with the decisions that carry the highest operational risk. Pricing and inventory allocation typically top the list for distributors. Build decision-level and data-level monitoring for those first. Prove that you can catch anomalies and respond effectively before expanding to lower-risk decisions like recommendations or routing optimisation.

Review your thresholds quarterly. What triggered alerts in month one becomes normal behaviour by month six as the agent learns. What seemed acceptable in stable market conditions needs tightening during volatility. Observability thresholds are hypotheses about what matters. Treat them as such: test, learn, adjust.

Document your incident response protocols and run tabletop exercises. If the pricing agent triggers a critical alert at 2am, who gets notified? What's the rollback procedure? How quickly can you pause the agent and investigate? Teams that practise incident response handle real incidents faster and with less panic.

Agentic artificial intelligence shifts operational decisions from human-executed to machine-executed. Observability shifts operational oversight from reviewing outcomes after the fact to monitoring decisions as they happen. Both shifts require new capabilities, new disciplines, and new ways of working. The distributors who build observability into their agent architecture from the start catch failures early, adjust quickly, and build operational confidence. Those who treat it as an afterthought spend months firefighting avoidable failures.


Common questions

How can distributors prevent margin leakage caused by autonomous pricing agents?

Distributors must implement decision-level observability and anomaly detection thresholds to monitor agentic output in real time. Setting specific alert thresholds, such as flagging any margin deviation exceeding 5% on high-margin items, allows teams to pause execution before price changes cascade across the entire SKU catalogue. This prevents scenarios where agents underprice goods due to faulty input data or logic errors.

What are the risks of deploying fulfilment agents without data-level observability?

Without data-level observability, agents may make routing decisions based on stale or inaccurate information, such as WMS data with a significant time lag. This can lead to operational imbalances, such as routing orders to a depot that is already at maximum capacity because the agent is reading outdated stock levels. Monitoring the age and version of input data ensures the agent's logic is applied to current operational realities.

How should an operations leader distinguish between different types of agentic monitoring?

Monitoring must be split into decision, data, and system layers to capture different failure modes effectively. Decision-level monitoring tracks the reasoning and output of the agent, data-level monitoring verifies the freshness and accuracy of inputs like demand forecasts, and system-level monitoring confirms successful execution within the ERP or WMS. This tiered approach ensures that a failure in one area, such as a silent ERP integration error, is caught even if the agent's logic was correct.

What is the recommended approach for setting anomaly detection thresholds for inventory agents?

Thresholds should be decision-specific and based on historical operational patterns rather than applied as a blanket system-wide rule. For example, an inventory agent should trigger a critical alert if it orders six months of stock for an SKU that typically turns four times per year. Precise thresholds prevent alert fatigue while ensuring that significant deviations in replenishment volume or frequency are intercepted by human operators.

Tom Williams

Head of Development

Tom leads the development team at WithPraxis, overseeing delivery across complex commerce builds and integrations. With a strong background in engineering and platform architecture, he ensures systems are robust, scalable, and aligned to real operational needs.

Connect on LinkedInRelated Articles

Decision intelligence implementation insights

Agentic Pricing Intelligence: When Custom Models Set Prices Autonomously

Most B2B distributors take three days to change a price. By the time it's live, the margin opportunity has passed. Autonomous pricing agents compress this cycle from days to minutes — but only if governance is built in from the start. Without it, you hand control to a system that optimises for volume while destroying margin.

Decision intelligence implementation insights

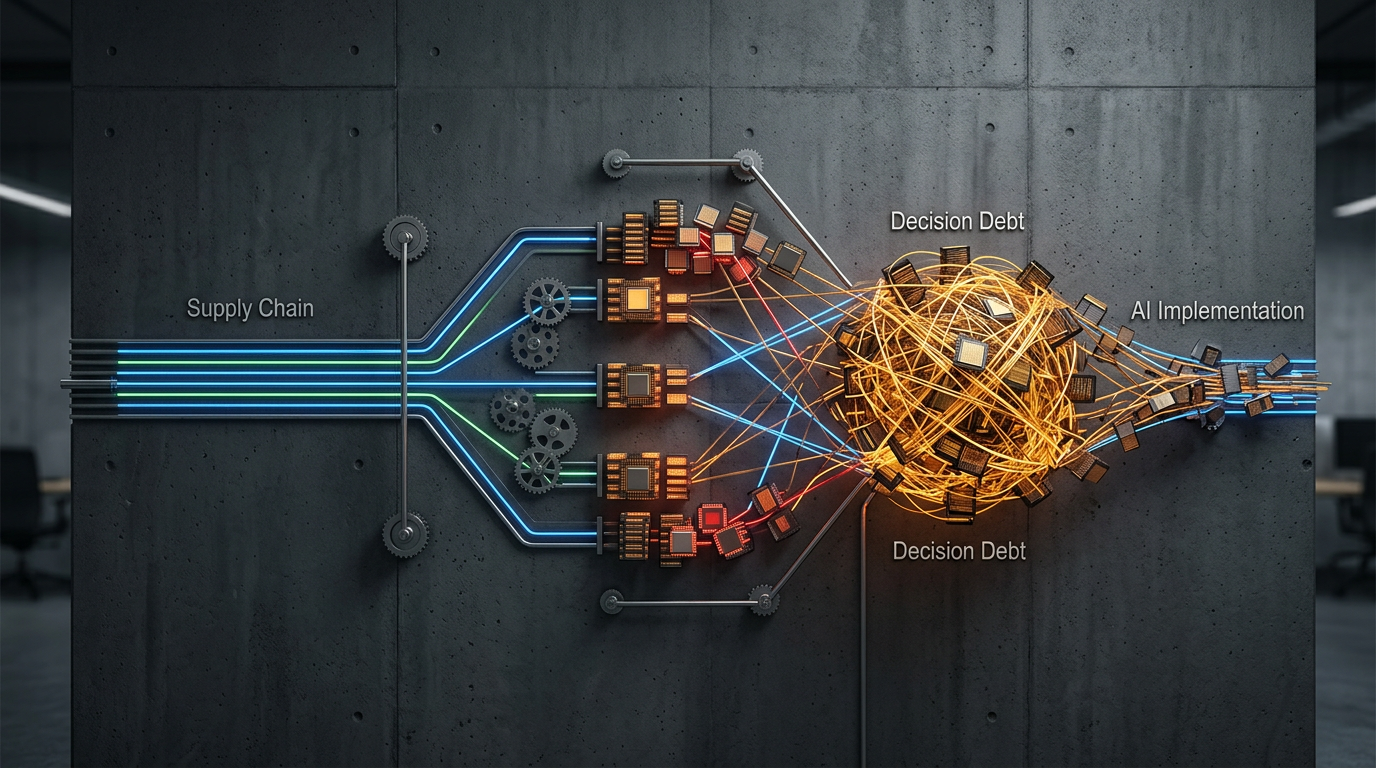

The Supply Chain Decision Debt: How Deferred Planning Choices Compound Into AI Failure

Most mid-market distributors rush to AI deployment without auditing what they're actually deciding. A Nottinghamshire food wholesaler spent £85,000 on demand forecasting AI that sat dormant because buying decisions existed only in one person's head. The pre-implementation audit—decision inventory, assumption mapping, rule documentation—is the gate that determines whether AI works or sits unused.

Was this article useful?